The importance of extensive third-party testing is well known, and every manufacturer with the slightest aspiration to win a good share of any market, global or domestic, must perform an assortment of lengthy and expensive tests that set the reliability bar considerably higher than standard IEC certification. Our test is not designed to substitute for these “pro-level” tests. It was designed to be low-cost, to have a fast turnaround, and, most importantly, to present the results in a “benchmarking” format using a 1-100 scoring system, so that our readers can get a quick, meaningful, user-friendly view of where each product stands with respect to its peers.

Low-cost testing was never meant to be low-quality, and pv magazine, together with its partners CEA, which designed and supervises the test, and Gsolar, which operates the test lab, took every possible measure to ensure the high quality of the processes and to streamline the methodology. Of course, as is normal for a new concept, there have been some hurdles to overcome and bugs to iron out. A major modification, detailed in the December 2017 issue, involved a change in the grading system to provide a more granular ranking. That encouraged more manufacturers to participate, as the previous letter grading system (A to D) had created an adverse perception among them. Also, the outdoor test required lengthy preparation and is finally being rolled out in this edition (see pp. 100-102).

Quality insights

In the last 10 months we received 14 products from eight manufacturers for testing, and also tested two products from two manufacturers randomly selected from warehouses in China. We believe that the test sequence has shown its value in highlighting weaknesses in the products and providing insights into the performance, quality, and reliability characteristics of the tested products. This article will present the results of the tests in an anonymized fashion, as the manufacturers submitting modules for testing reserve the right to not disclose their names or the product names as long as the testing process is ongoing and results have not been published. So far, only four manufacturers have authorized the full publication of the test results for four of their products.

Level playing field

One important conclusion we reached early on was that our method of remote random sampling proved very effective. As explained in the September 2017 edition of pv magazine, CEA randomly selects five serial numbers of the samples from a batch of 2,000 modules, and the manufacturer has to submit electroluminescence (EL) images within two hours.

This creates a level playing field for everyone: without a time limit, it would be fairly easy for a manufacturer to inspect the samples and replace any problematic ones with new modules bearing the same serial numbers. The two-hour limit makes this impossible. An EL image is like a fingerprint, as it has rich, unique features resulting from print defects and process variations.
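The random selection step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not CEA's actual procedure: the serial number format, the function name `select_sample_serials`, and the choice of the operating system's random source are all hypothetical.

```python
import secrets


def select_sample_serials(batch_serials, sample_size=5):
    """Pick `sample_size` distinct serial numbers uniformly at random.

    Hypothetical helper: the article only states that five serial
    numbers are drawn at random from a batch of 2,000 modules.
    """
    serials = list(batch_serials)
    if sample_size > len(serials):
        raise ValueError("sample size exceeds batch size")
    # SystemRandom draws from the OS entropy source, so the picks
    # cannot be predicted from a known seed (an assumption about
    # what a fair draw would need, not a documented requirement).
    rng = secrets.SystemRandom()
    return rng.sample(serials, sample_size)


# Hypothetical serial number format for a batch of 2,000 modules.
batch = [f"SN{n:06d}" for n in range(1, 2001)]
picked = select_sample_serials(batch)
print(picked)
```

Because the draw happens only when the request is sent, the manufacturer cannot know in advance which five modules will need EL images within the two-hour window.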

We discovered that in two separate cases, the samples we received and inspected had EL images with different patterns from the ones originally submitted by the manufacturer. The test was aborted, and the manufacturer was instructed to submit new samples. In another case involving a product from one of the aforementioned manufacturers, the lab EL images of the samples showed severe defects, and the manufacturer decided to abort the test.

As a result, a total of 13 products from 10 manufacturers were tested out of the 16 received or acquired.

Interesting observations

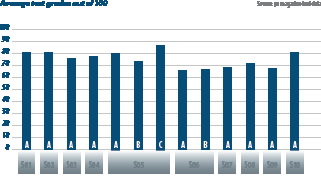

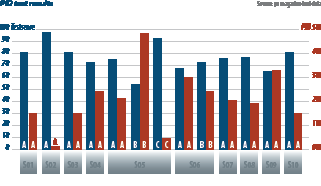

Looking at the first chart on this page, it is interesting to note that Manufacturer S05 submitted three products (A, B, and C) and received very high grades across all six visual and EL inspections: a perfect grade of 100 five times, and a still very high grade of 86 once. This shows a very good quality level in production.

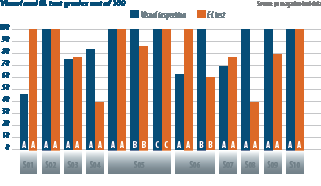

As shown on the middle chart, the product of manufacturer S01 has almost zero loss, which is very beneficial for northern installations with prevailing low light conditions. Manufacturer S04 has a negative loss, effectively a gain, meaning that the module efficiency improves in low light.

Manufacturers S05 and S10 also have very low losses. This can be explained by the advanced cell architectures employed, showing better efficiency in low light than conventional cell types.

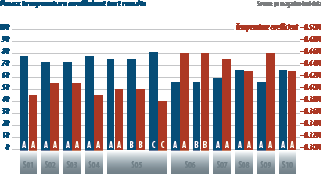

We observed that advanced cell architectures exhibited better temperature coefficients (lower absolute values; see bottom chart on p. 98), typically between -0.40%/°C and -0.38%/°C, whereas conventional cells reached values as large as -0.46%/°C, which can be very detrimental to energy yield in hot climates.
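To give a feel for what these coefficients mean in the field, here is a rough worked example in Python. It assumes the common linear model, in which power changes by the temperature coefficient for every degree of cell temperature above the 25°C reference of standard test conditions. The 65°C cell temperature and the function name are assumptions chosen for illustration, not measurements from our test.

```python
def relative_power_change_pct(temp_coeff_pct_per_c, cell_temp_c,
                              reference_temp_c=25.0):
    """Power change in percent of rated power, under a linear model.

    Assumption: power scales linearly with cell temperature, with the
    coefficient given in %/°C relative to the 25°C reference.
    """
    return temp_coeff_pct_per_c * (cell_temp_c - reference_temp_c)


# Assumed hot-climate cell temperature (illustrative only).
HOT_CELL_TEMP_C = 65.0

# Coefficients quoted in the article.
for label, coeff in [("advanced cell", -0.38), ("conventional cell", -0.46)]:
    change = relative_power_change_pct(coeff, HOT_CELL_TEMP_C)
    print(f"{label}: {change:.1f}% of rated power at {HOT_CELL_TEMP_C:.0f}°C")
```

Under these assumptions, the module at -0.38%/°C gives up about 15.2% of its rated power, against about 18.4% for the module at -0.46%/°C, a gap of roughly 3.2 percentage points whenever the cells are that hot.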

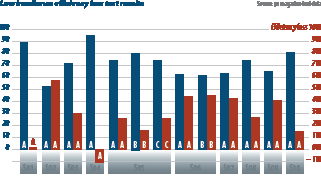

As we see in the chart to the right, manufacturer S02 had almost 0% PID, and this is due to a very special cell architecture that is not prone to PID. Manufacturer S05 had mixed results, with one sample showing very high degradation and another one showing very low. The high result may be due to an unsuitable encapsulant but is also amplified by the fact that the cell was bifacial, as bifacial modules are more sensitive to PID than monofacial modules.

Another interesting observation, not depicted in the charts, is that raising the PID test voltage from 1,000 V to 1,500 V has a disproportionate effect: when we tested the same product at both voltages, the degradation tripled for a 50% increase in voltage.

Finally, we do not yet have enough results from LID tests. This test is optional, as it requires on-site witnessing of the sample selection process at the factories, and most manufacturers have not yet opted for it. However, we expect that as PERC cells become the predominant technology, and manufacturers strive to prove that they have solved the well-known LID issue of PERC cells, we will have more LID results in the future.

Author: George Touloupas
